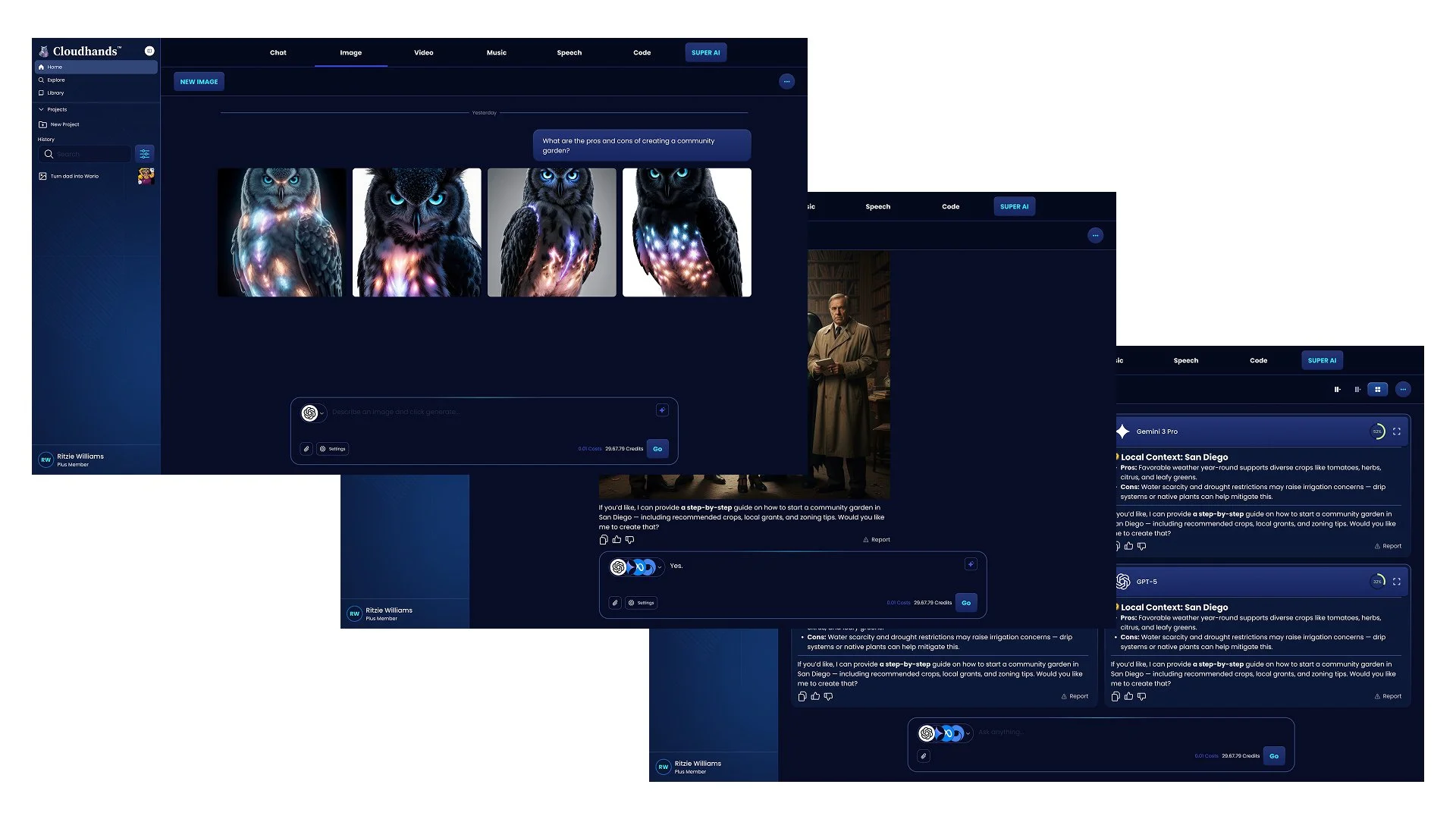

Cloudhands.ai

Cloudhands is a multi-modal AI platform designed to simplify how users interact with rapidly expanding AI capabilities. As the number of models and tools continues to grow, users face increasing friction in determining which AI to use for specific tasks and in effectively combining them into workflows.

The product was built to act as an orchestration layer across AI tools—enabling users to generate, edit, and optimize content across formats, including image, video, audio, text, and code, within a unified experience. Rather than navigating fragmented tools, Cloudhands centralizes these capabilities and introduces structured workflows to guide users toward better outcomes.

At its core, Cloudhands explores a shift from isolated AI tools toward coordinated systems—where users can leverage pre-defined workflows, or “agents,” to execute complex tasks more efficiently.

My Role

As Head of Design at Cloudhands, I led the end-to-end product design for a 0→1 multi-modal AI platform, working closely with product, engineering, and company leadership to define both the user experience and overall product direction.

My role extended beyond design into product strategy and process. In a highly ambiguous, early-stage environment, I helped define how the team operated by partnering with a project manager to establish an agile workflow, implement work tracking, and structure the product roadmap in ClickUp. This created alignment across design, product, and engineering, and enabled the team to move from exploration to execution more effectively.

I also played a key role in shaping the product itself—defining how Cloudhands approached AI orchestration, multi-modal workflows, and user interaction models across tools, assistants, and agents. I collaborated directly with stakeholders, including the company’s top investor, to align on vision, priorities, and tradeoffs.

While leading design and contributing to product direction, I remained hands-on—driving research, defining user flows, creating high-fidelity designs, and supporting implementation through beta.

Process

Documentation & Research

Problem

As the AI ecosystem rapidly expands, users face an overwhelming number of tools and models—each optimized for different tasks, such as image generation, writing, coding, or video creation. While individual tools continue to improve, the overall experience has become increasingly fragmented.

Users are often left asking:

Which model should I use for this task?

How do I move between tools to complete a workflow?

How do I get consistent, high-quality results without trial and error?

This fragmentation creates friction at multiple levels. At a basic level, users struggle to select the right tool. At a more advanced level, they lack a clear way to chain together multiple AI capabilities into cohesive workflows.

For Cloudhands, the core challenge was not just to build another AI tool, but to define a system that could simplify decision-making, unify capabilities across modalities, and guide users from intent to outcome in a more structured and efficient way.

Predispositions

Before exploring solutions, I established a set of initial hypotheses to guide early product thinking. Given the ambiguity of the space, these predispositions helped frame where to focus and which directions to explore.

Users need guidance, not just access

Existing AI platforms provide powerful tools but place the burden of decision-making on users. I believed the product should actively guide users toward the right tools and workflows, rather than simply exposing capabilities.

Workflows matter more than individual tools

Most real-world use cases require multiple steps, such as generating an image, refining it, and adapting it for different outputs. I assumed that enabling structured, multi-step workflows would be more valuable than optimizing isolated interactions.

A unified platform can reduce friction

Switching between tools creates cognitive and operational overhead. I hypothesized that bringing multiple modalities (image, video, text, audio, code) into a single environment would simplify the user experience.

Interaction models need exploration

It was unclear whether users would best engage with the product through tools, assistants, or more automated “agent”-based workflows. I treated this as a core area of exploration rather than a fixed assumption.

Simplicity would be critical for adoption

While the underlying capabilities are complex, the experience needed to feel approachable. I believed the product should balance power with clarity to avoid overwhelming users.

These predispositions shaped early exploration and were continuously validated, refined, or challenged throughout the design process.

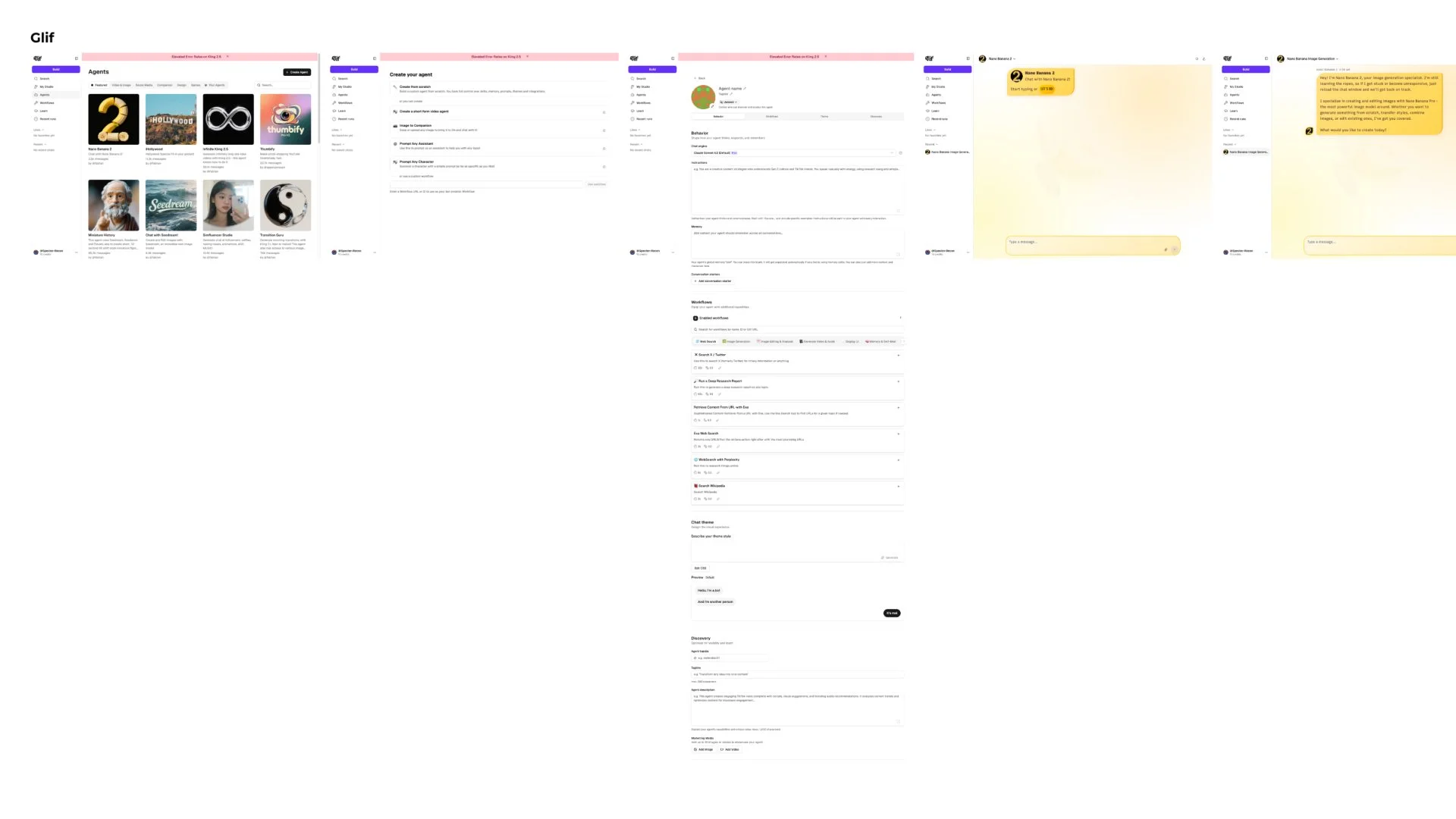

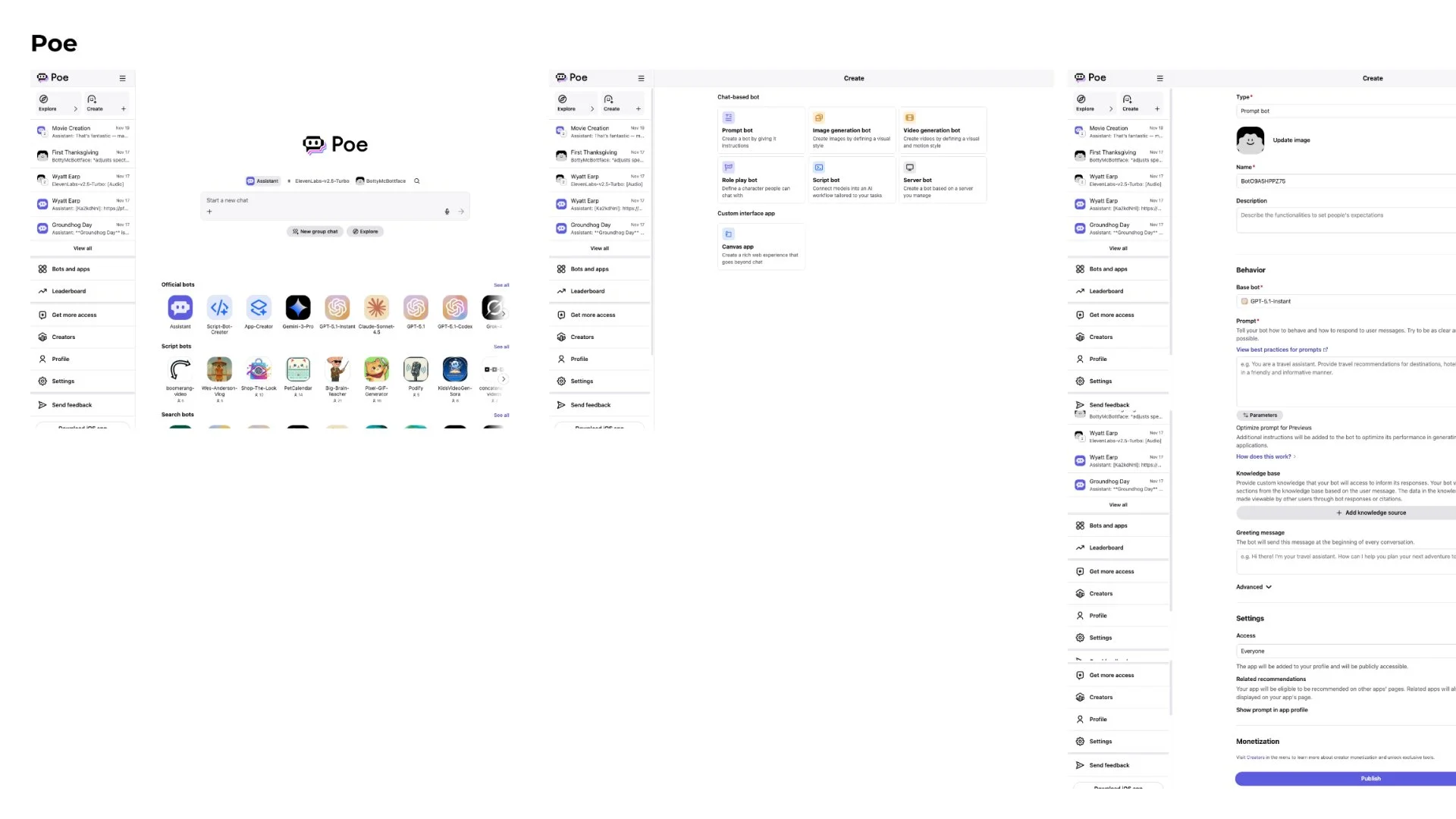

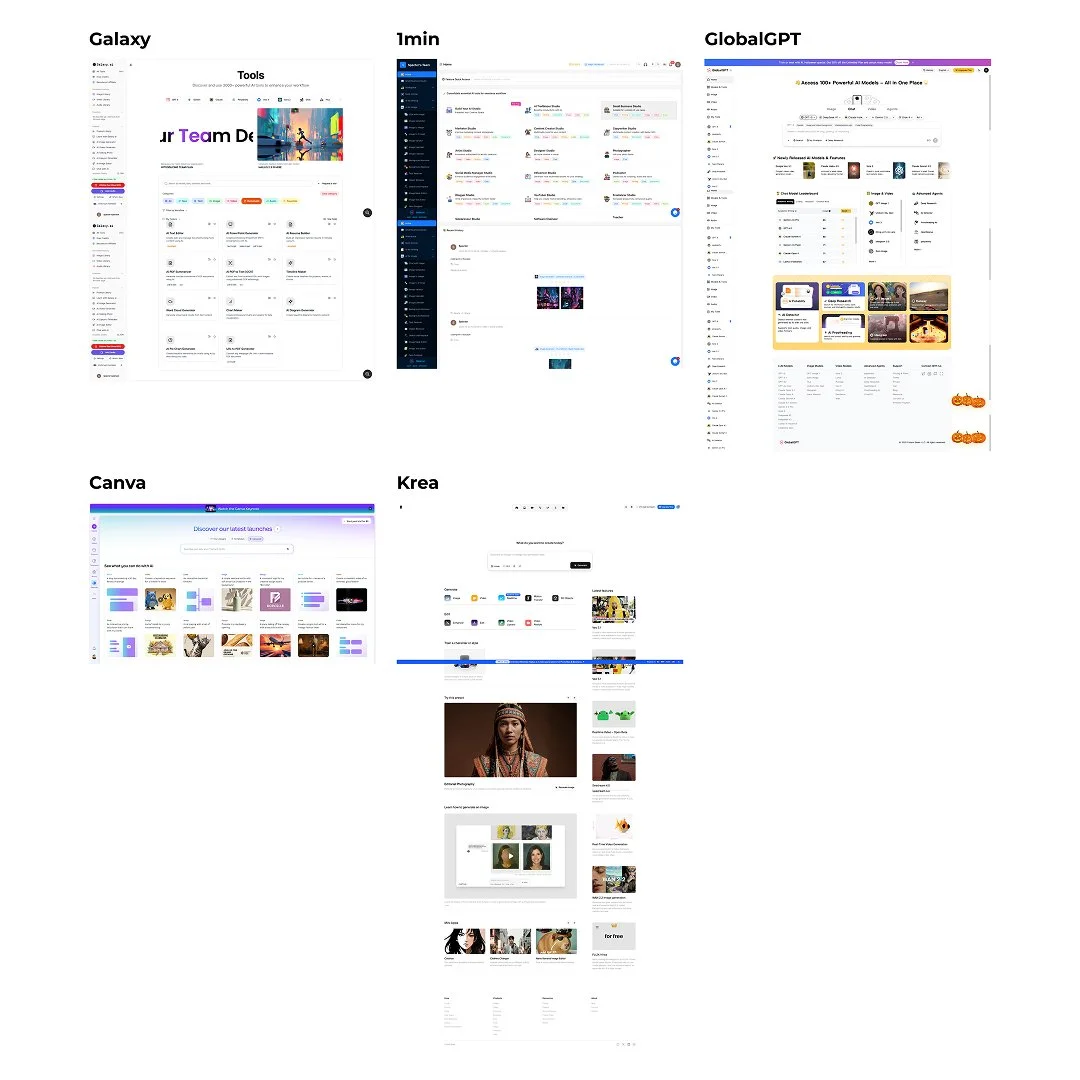

Competitive Analysis & Inspiration

To better understand the evolving AI landscape, I analyzed a range of existing platforms, looking for patterns in how users interact with AI tools and for gaps in the experience.

Platforms like Poe centered on conversational interactions, allowing users to switch between models within a chat-based interface. While this approach made AI more accessible, it primarily served text-based workflows and offered limited support for multi-modal or structured use cases.

Other platforms, such as Galaxy.ai, took a tool-based approach—surfacing a wide range of AI capabilities in a single place. While this provided breadth, the experience often lacked cohesion, requiring users to navigate and piece together workflows on their own.

Across both approaches, a consistent gap emerged:

Limited support for multi-step workflows

Minimal guidance on when and how to use different models

Fragmentation between tools, outputs, and user intent

These insights helped frame an opportunity for Cloudhands to move beyond both conversational-only and tool-aggregation models, and instead explore a more structured system that could unify multi-modal capabilities while guiding users through workflows.

This exploration also informed key product directions, including the introduction of AI recipes (structured workflows), model comparison tools, and the concept of orchestration across different AI capabilities.

What Exists Today

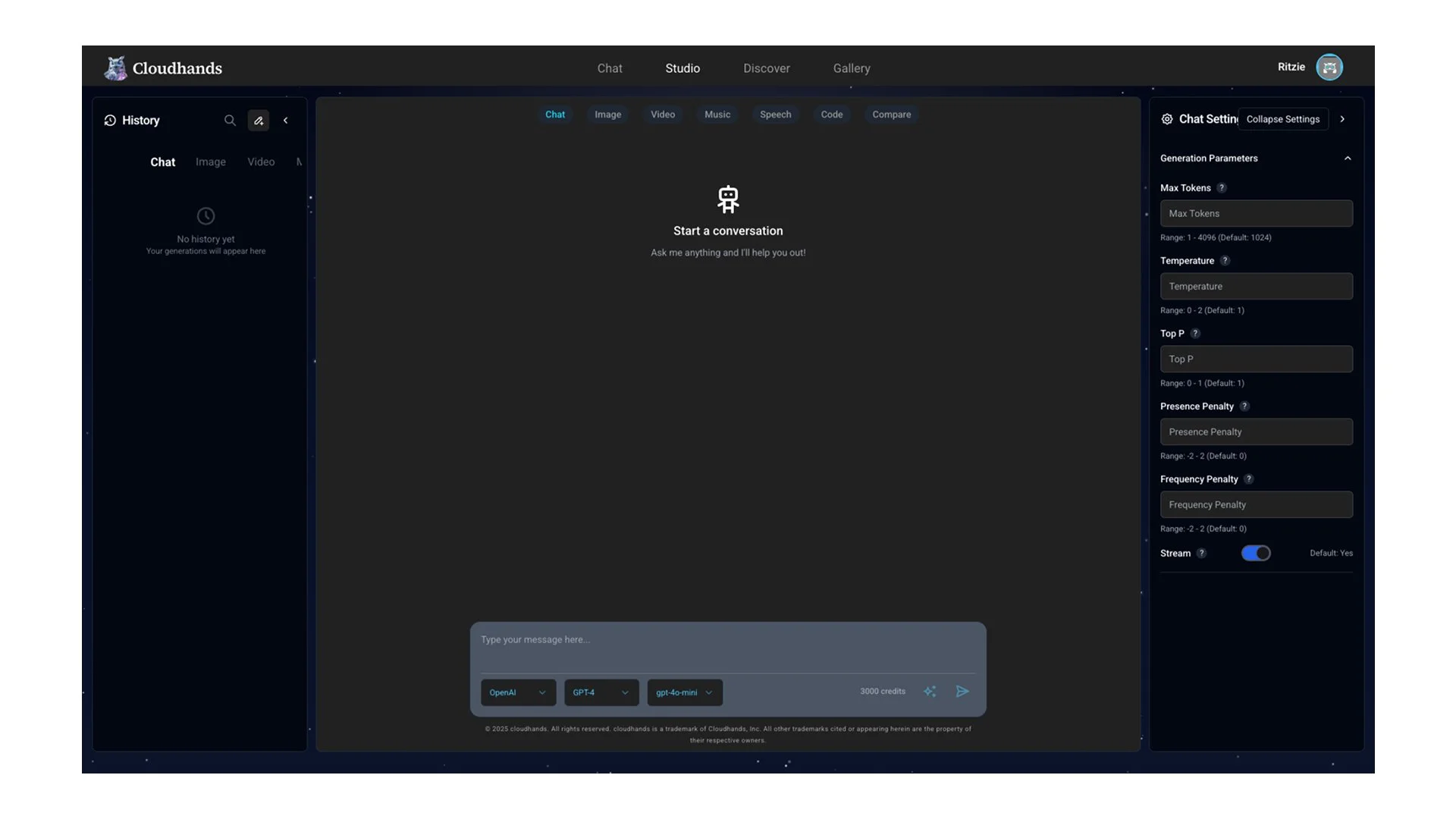

When I joined Cloudhands, the product consisted of a broad set of AI capabilities—including image, video, text, and other generative tools—but lacked a clear structure to guide user interaction.

Functionality was added rapidly, resulting in an experience that felt like a collection of features rather than a cohesive product. There was no strong hierarchy, navigation model, or defined workflows connecting these capabilities. As a result, users were left to interpret how different tools related to one another and how to use them together effectively.

This created several challenges:

Lack of direction: Users had no clear starting point or guidance on what to do next

Disconnected experiences: Tools existed independently, with little support for multi-step workflows

Increased cognitive load: The breadth of functionality made the product feel overwhelming rather than empowering

From a product perspective, this also made it difficult to prioritize features, define a roadmap, or align the team around a clear vision.

This initial state highlighted the need to move from a collection of capabilities to a more intentional, structured system that could guide users and scale effectively.

Concepts

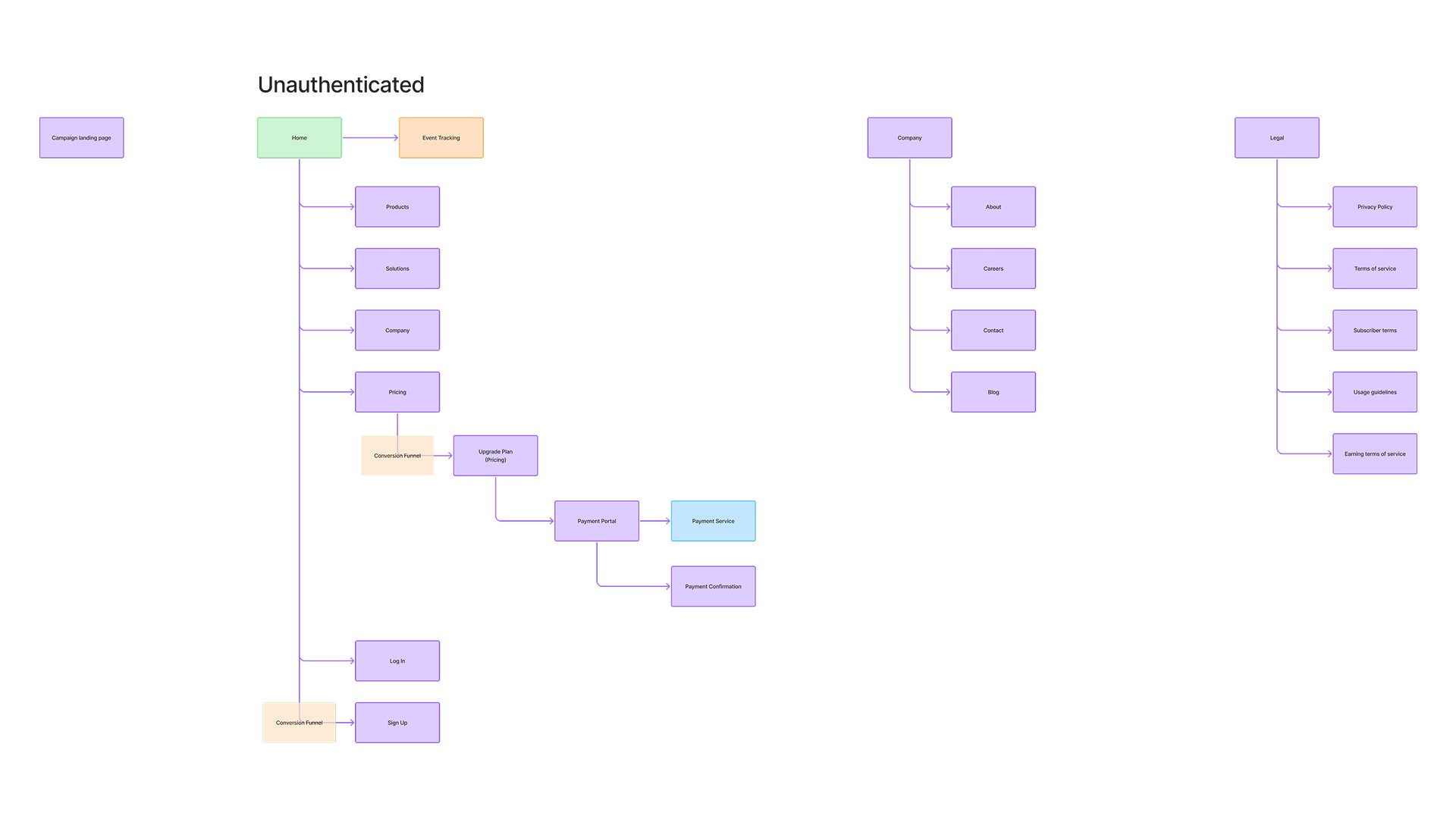

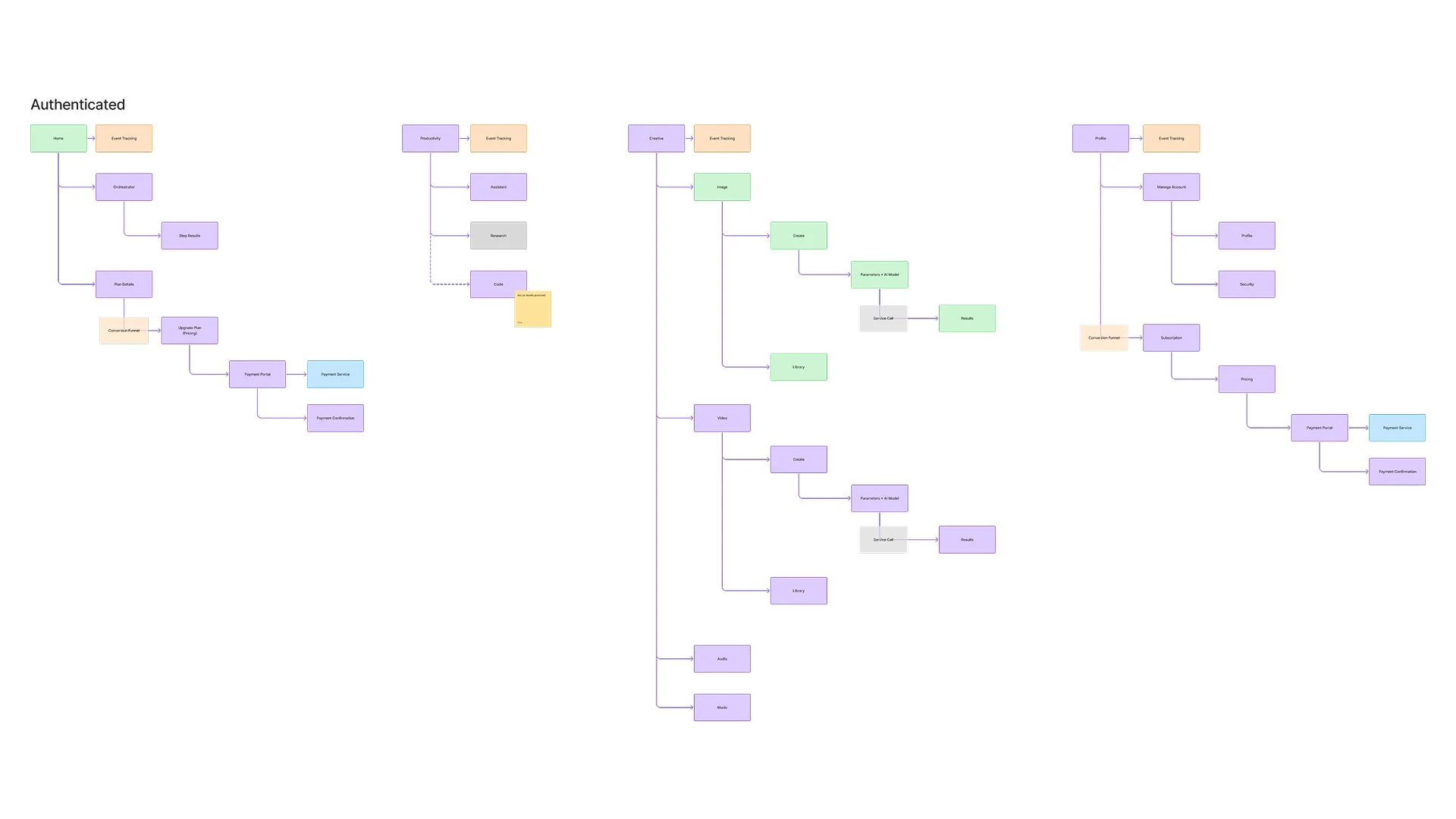

Information Architecture

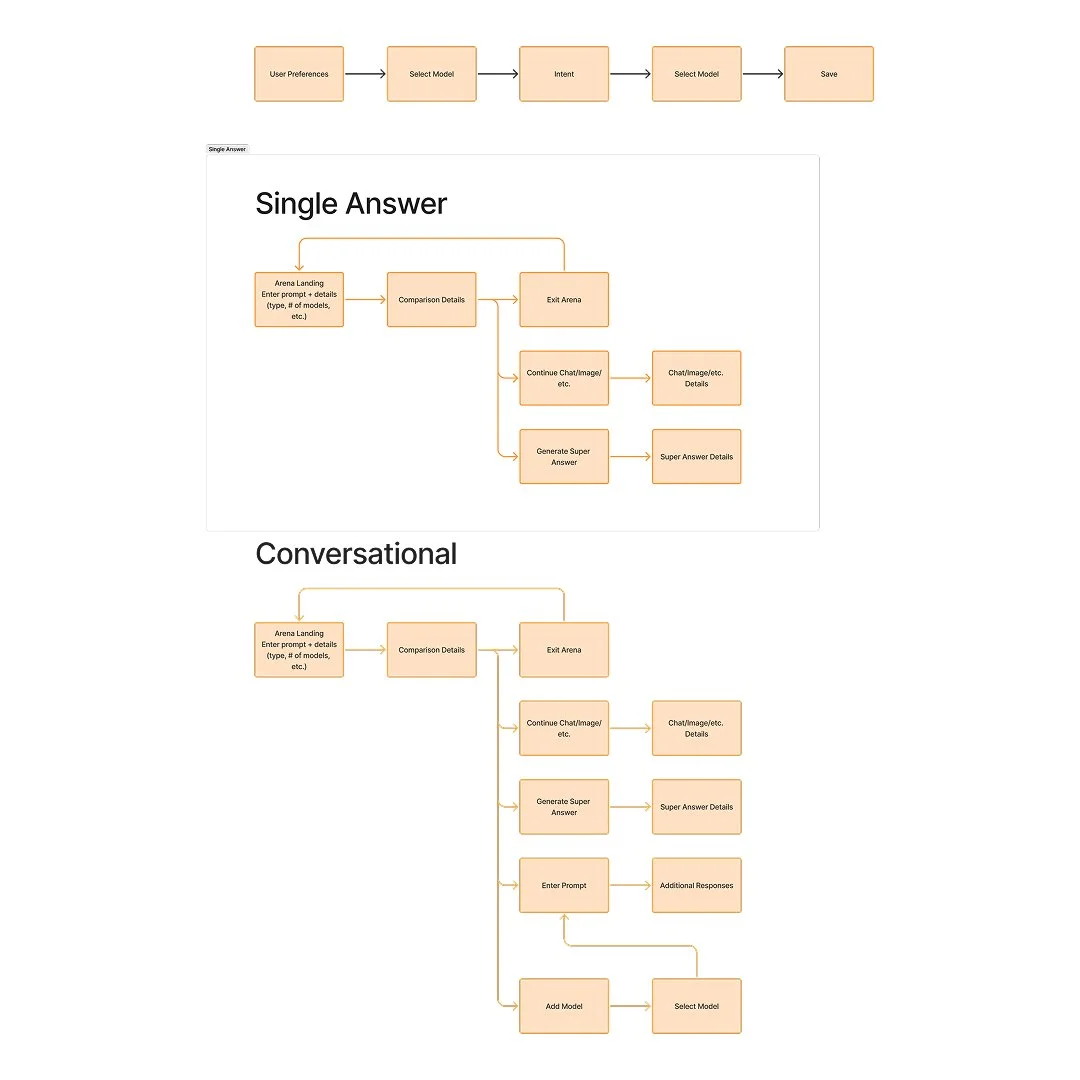

User Flows

Drawings

Concepts

Writing

Usability Testing

High-Fidelity Designs + Prototypes

Shared Components

File Layout

Design QA

Key Features

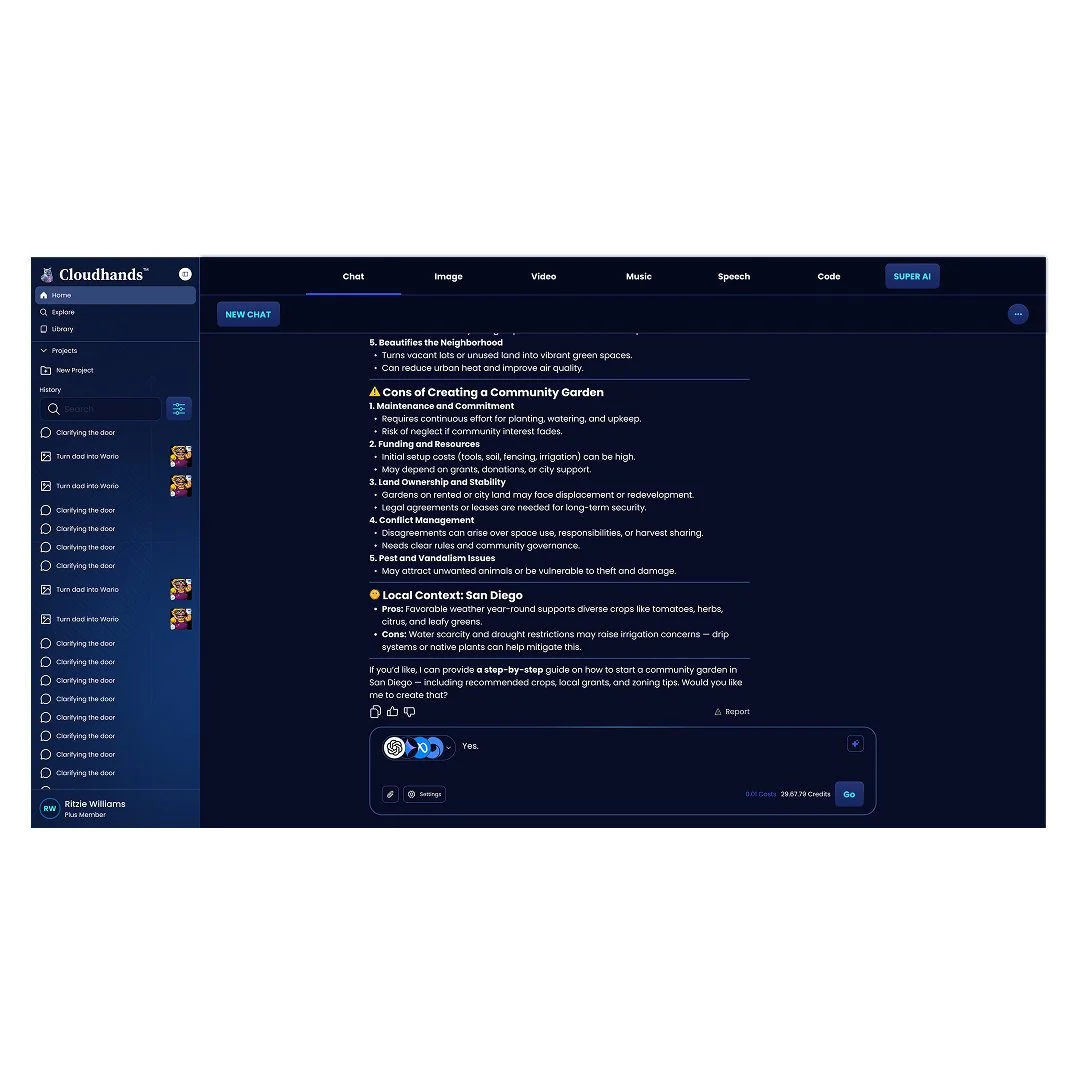

AI Assistant

A conversational interface that allows users to interact with AI in a more natural, guided way. The assistant serves as an entry point to the platform—helping users generate content, refine outputs, and navigate its capabilities without needing to understand the underlying models.

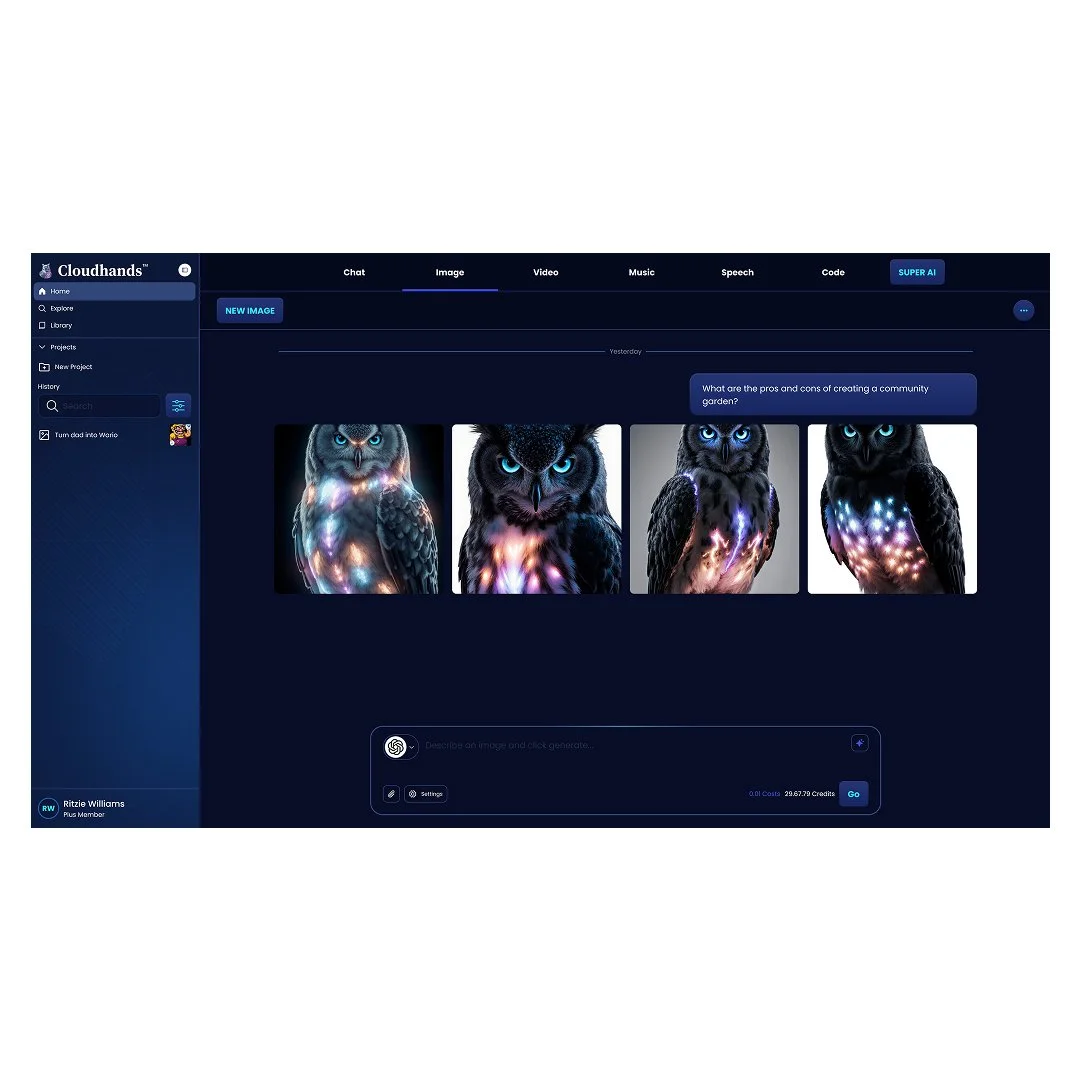

Image Generation

Enables users to create and edit images using AI models. Designed to support both quick generation and iterative refinement, allowing users to experiment, adjust prompts, and evolve outputs within a single workflow.

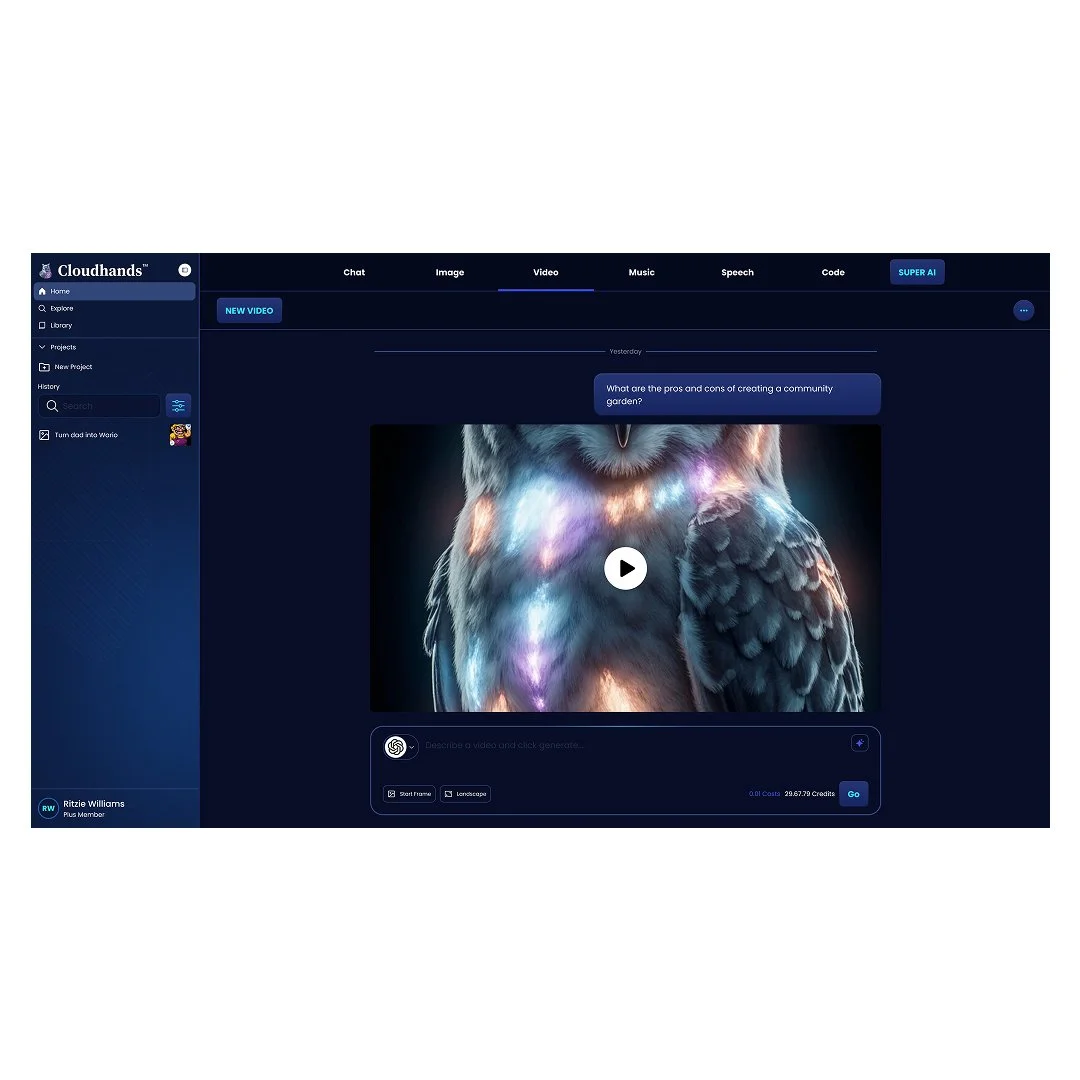

Video Generation

Provides tools for generating and editing video content using AI. This extends the platform’s capabilities to more complex, multi-step creative workflows, enabling users to move from concept to visual output more efficiently.

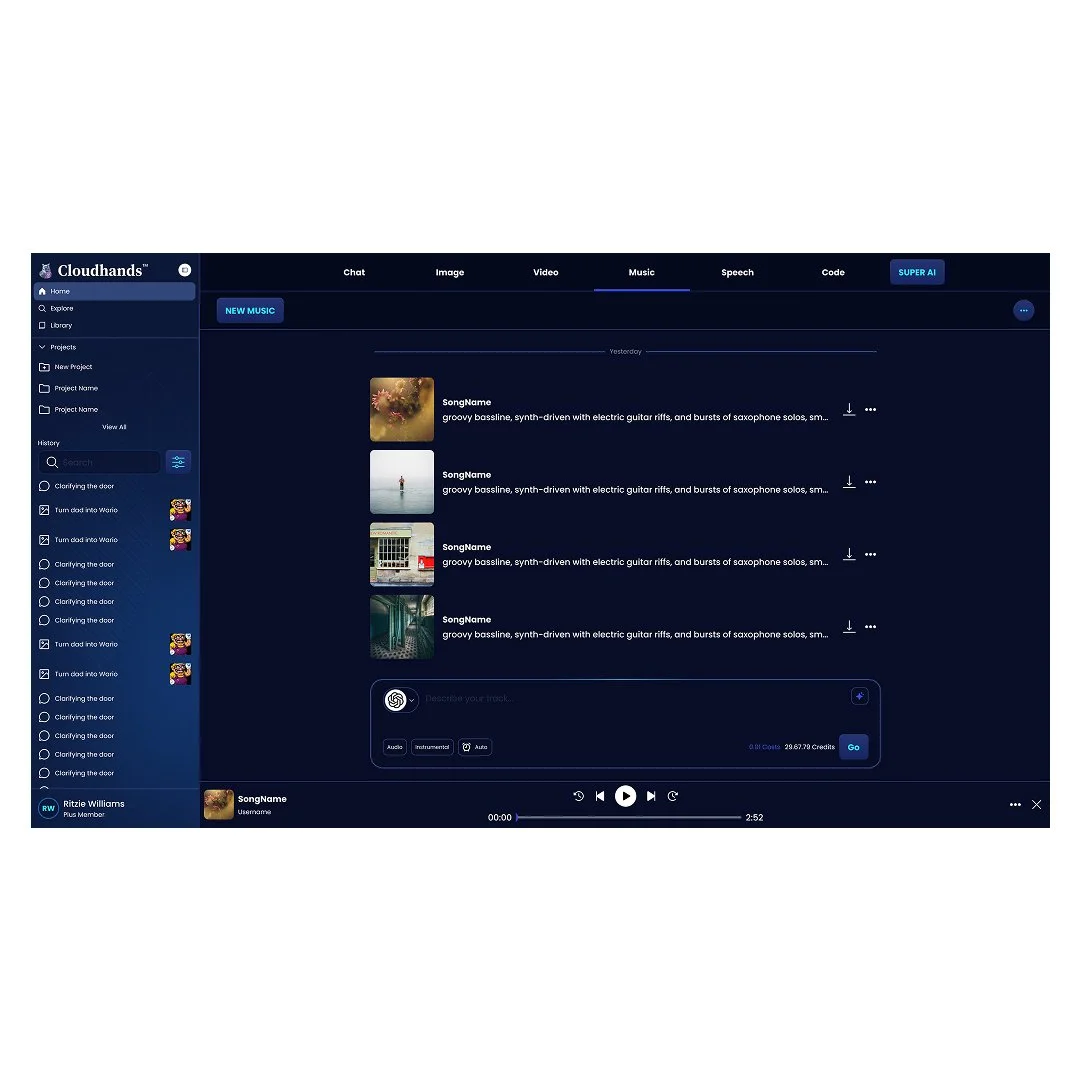

Audio Generation

Supports the creation and manipulation of non-speech audio, such as music or sound effects. This complements speech generation and enables a broader range of creative and production workflows.

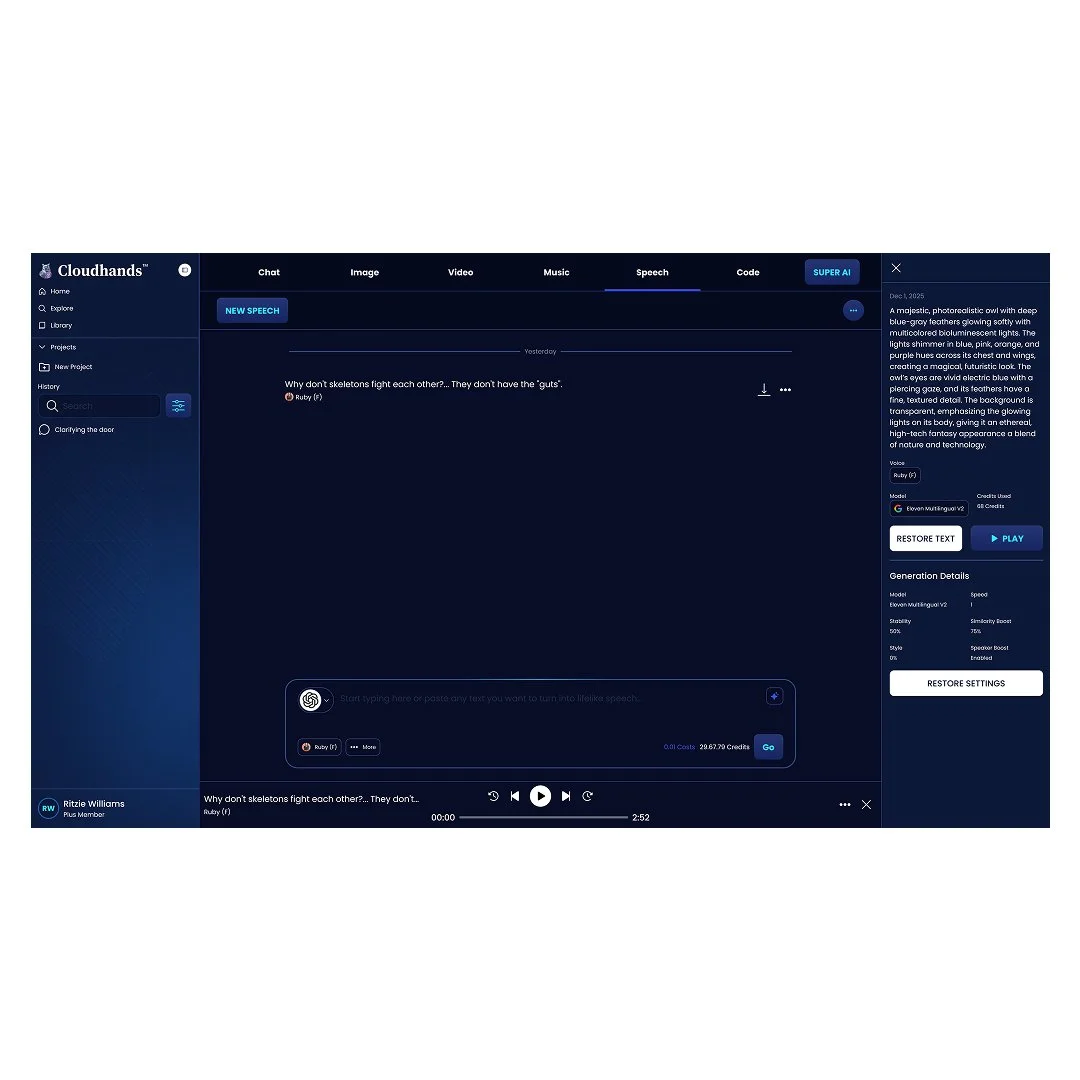

Speech Generation

Allows users to generate realistic voice outputs from text. This feature supports use cases such as narration, voiceovers, and audio content creation, expanding the platform’s reach into spoken media.

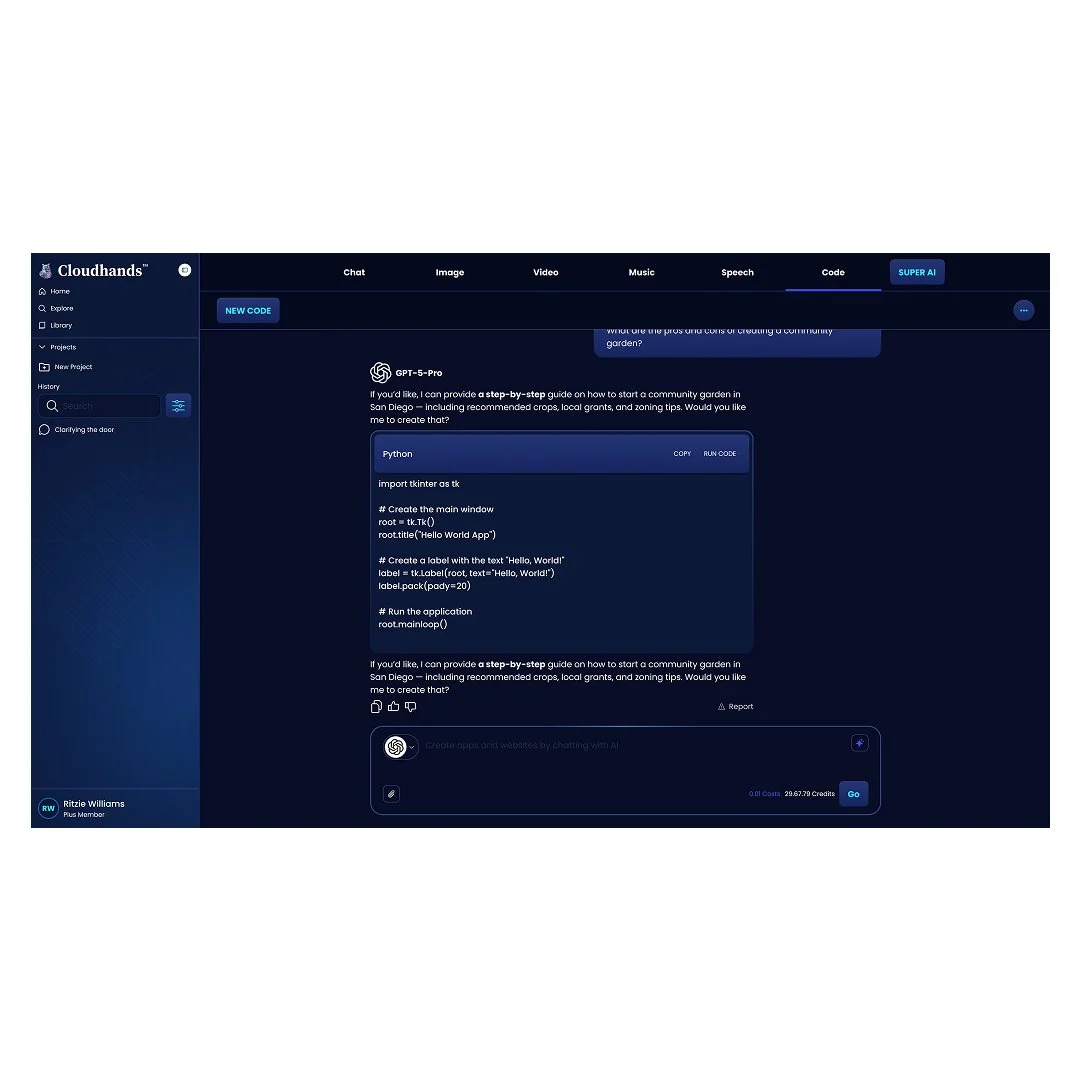

Code Generation

Enables users to generate and refine code through AI. Designed for both technical and non-technical users, this feature helps accelerate development tasks, prototyping, and problem-solving.

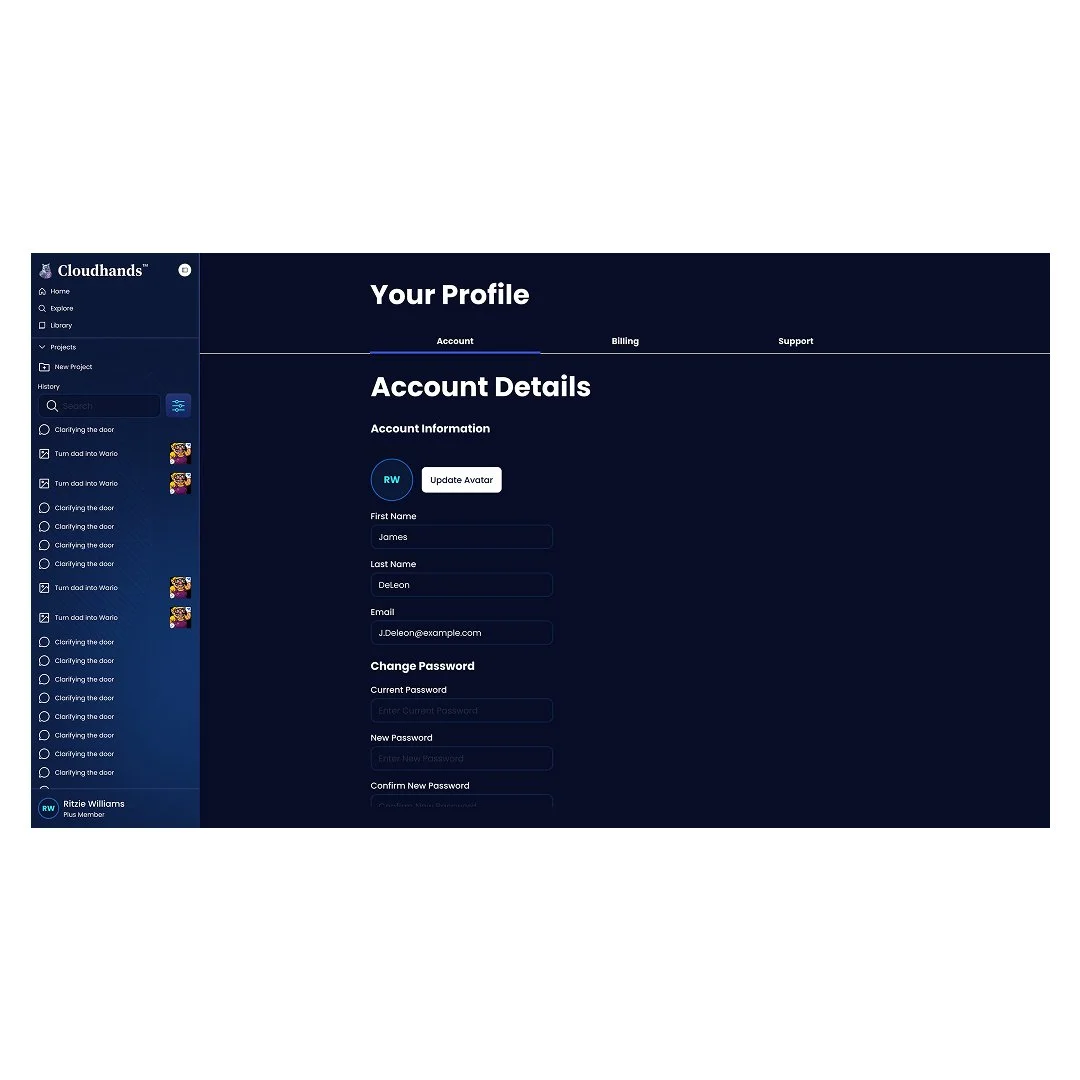

User Profile

Provides a centralized space for managing user activity, preferences, and generated content. This supports continuity across sessions and lays the foundation for personalization within the platform.

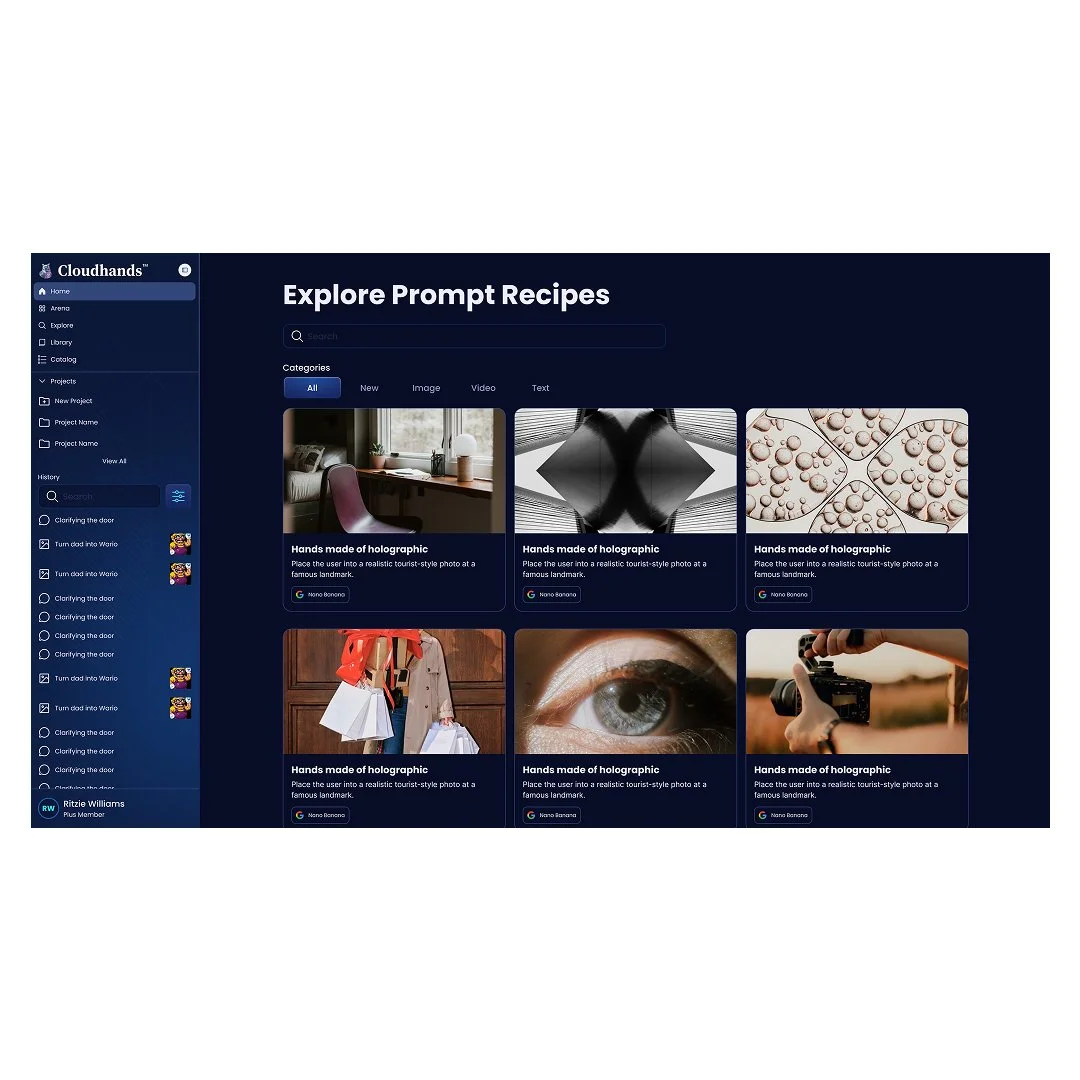

AI Recipes

Introduces structured, repeatable workflows that guide users through multi-step processes. Recipes reduce the need for trial-and-error by combining multiple AI capabilities into predefined sequences for common use cases.
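As a rough illustration of the idea, a recipe can be thought of as an ordered list of steps in which each step's output becomes the next step's input. The sketch below is only a conceptual model, not the Cloudhands implementation: the `Step` and `Recipe` classes, the step functions, and the "social-post" example are all hypothetical, and the placeholder functions stand in for real model calls.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    """One stage of a recipe (hypothetical structure)."""
    name: str
    run: Callable[[str], str]  # takes the previous output, returns a new one


@dataclass
class Recipe:
    """A predefined sequence of steps (hypothetical structure)."""
    name: str
    steps: List[Step]

    def execute(self, user_input: str) -> List[str]:
        # Feed each step's output into the next and keep every
        # intermediate result so the user can review or refine it.
        outputs: List[str] = []
        current = user_input
        for step in self.steps:
            current = step.run(current)
            outputs.append(current)
        return outputs


# Placeholder steps; a real system would call text or image models here.
def draft_caption(prompt: str) -> str:
    return f"caption for: {prompt}"


def generate_image(caption: str) -> str:
    return f"image({caption})"


def resize_for_social(image: str) -> str:
    return f"resized({image})"


social_post = Recipe(
    name="social-post",
    steps=[
        Step("caption", draft_caption),
        Step("image", generate_image),
        Step("resize", resize_for_social),
    ],
)

results = social_post.execute("spring sale")
```

Keeping the intermediate outputs, rather than only the final one, reflects the goal of letting users inspect and adjust each stage instead of restarting the whole workflow.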

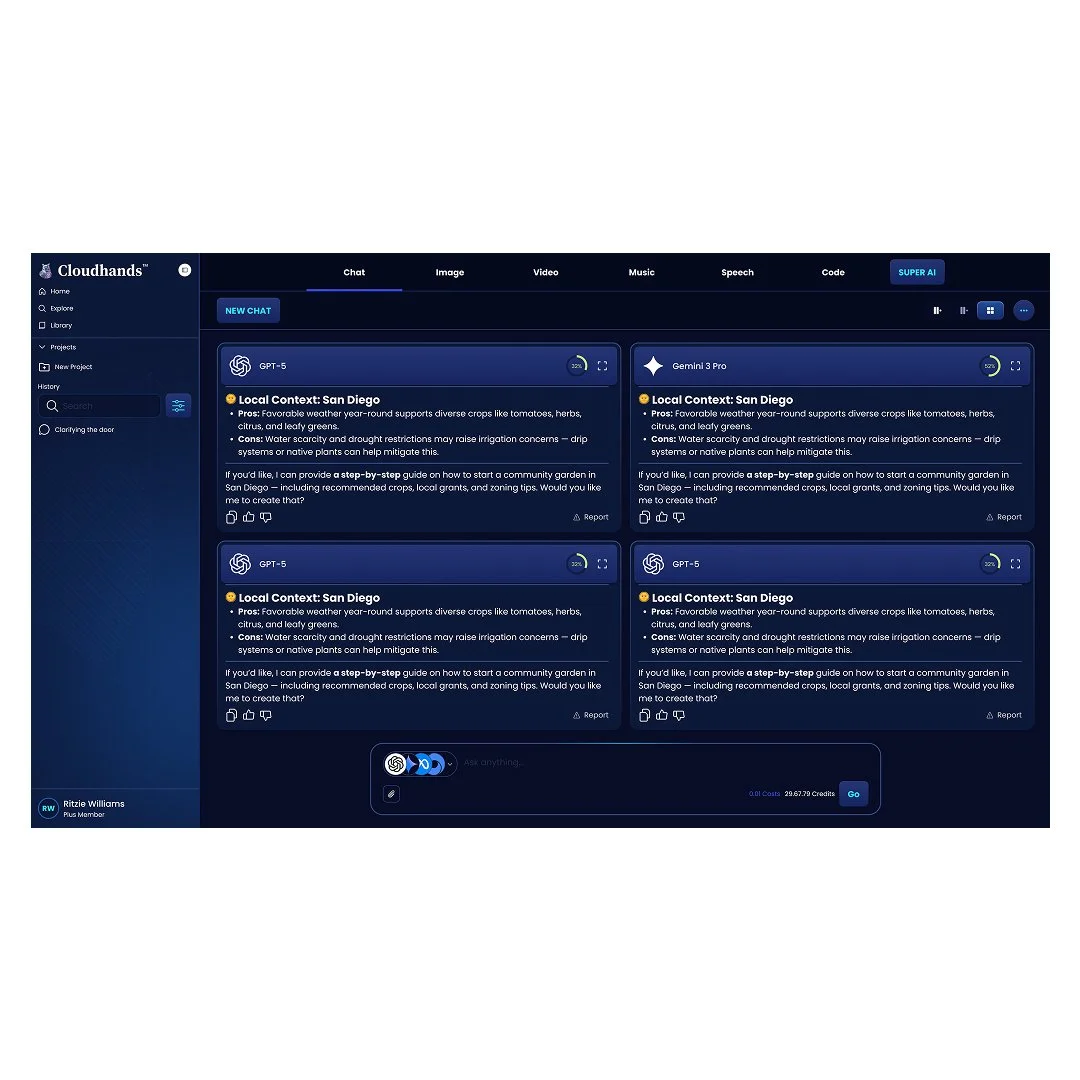

Compare AI Models (Arena)

Enables users to compare outputs from multiple AI models side-by-side. This helps users evaluate quality, understand differences, and make more informed decisions when selecting models.

Results & Conclusion

Through this work, Cloudhands evolved from a fragmented set of AI capabilities into a more structured, multi-modal platform and was brought to beta release.

The product established a cohesive foundation across key areas, including:

AI Assistant for conversational workflows

Image, video, speech, audio, and code generation

AI recipes to guide structured, repeatable workflows

Model management and comparison to support informed decision-making

User profiles to support personalization and continuity

This shift from isolated tools to a more unified system enabled users to more effectively navigate and utilize a wide range of AI capabilities within a single experience.

In parallel, I helped establish product and design processes that improved team alignment and execution: introducing agile workflows, implementing work tracking in ClickUp, and structuring the roadmap to better support prioritization in a rapidly evolving space.

While the company underwent layoffs before full-scale launch, the beta release demonstrated a clear product direction. It validated the core opportunity: moving beyond individual AI tools toward guided, multi-step workflows and model orchestration.

Key Takeaways

Structuring complexity is critical when working with emerging technologies like AI.

Clear workflows and guidance can significantly reduce user friction in multi-tool environments.

Early investment in product, process, and alignment enables faster, more effective execution.

Designing at the systems level is essential when building multi-modal platforms.